Architecture Issues in DRAM Devices and Systems: Overview

Since the early 1980s, we have enjoyed an exponential increase in microprocessor performance of roughly 50% per year [Hennessy & Patterson 1996]. This exponential growth rate has enabled many technologies that we take for granted today, including affordable personal computing and networks fast enough to support high-bandwidth interactive use (e.g., the WWW). In addition, the rapid turnover from high performance to obsolescence ensures a generous supply of microprocessors that are not competitive in the desktop arena but are quite adequate for embedded-system needs. These processors cannot command high-performance price premiums and are instead sold for mere dollars apiece, thus enabling high-performance embedded applications such as digital cellular phones, CAT scanners, telephone switches, and high-resolution video games.

However, the phenomenal pace of improved system performance is expected to come to a grinding halt within the next decade [Wulf & McKee 1995, Przybylski 1996]: the memory and processor components of computer systems are both increasing in performance, but at significantly different rates. Within the first few years of the 21st century, processors are expected to be so far ahead of DRAM systems in performance that the overall rate of improvement will be limited by memory, at roughly 7% per year. After five years, a 7% growth curve versus a 50% growth curve yields a factor-of-five difference; after ten years, the difference is a factor of twenty-nine.
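The factor-of-five and factor-of-twenty-nine figures follow from compounding both rates from a common baseline: after n years the gap is (1.50 / 1.07)^n. A minimal Python check (the function name is ours, for illustration only):

```python
def performance_gap(cpu_rate: float, dram_rate: float, years: int) -> float:
    """Ratio of CPU to DRAM performance after `years` of compound growth,
    assuming both start from the same baseline."""
    return ((1 + cpu_rate) / (1 + dram_rate)) ** years

for years in (5, 10):
    gap = performance_gap(0.50, 0.07, years)
    print(f"{years} years: {gap:.1f}x")
# prints "5 years: 5.4x" and then "10 years: 29.3x"
```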

Processor performance has grown at such a tremendous rate because it draws on two sources of improvement: technology (i.e., advances in circuit and device physics) and architecture (i.e., design choices such as pipelined execution, caching, exploitation of instruction-level parallelism, etc.). By contrast, DRAM performance has relied upon technology improvements alone; no corresponding architecture-level mechanisms have been employed at the DRAM level to improve performance. There has been an enormous amount of research on memory systems, and numerous published studies redesign the CPU to tolerate long memory latencies: examples include instruction and data prefetching in both hardware and software, out-of-order execution, lockup-free caches, speculative loads, data prediction, speculative execution, multithreaded execution, memory-request reordering at the controller level, etc. More than a hundred papers in the last three years of architecture research have looked at what can be done on the CPU side to tolerate or reduce memory latency. However, all of this work has been at the microprocessor level, not at the DRAM level.

It is imperative that we investigate architecture issues in DRAM devices and DRAM systems. This is the only way to avoid an ever-increasing gap between microprocessor performance and DRAM performance.

This project encompasses a series of experiments that explore the behavior of the primary memory system, including the effect of concurrency at the DRAM level, the effect of multiple channels between the CPU and the DRAM system, the effect of multi-banking and interleaving at different granularities, transfer-width optimizations, the performance of embedded-DRAM organizations with a DSP execution model, and the effect of bank conflicts on execution time.

For example, the following are absolutely fundamental questions, yet they are all open research issues in the field of DRAM device and system architectures:

- What is the performance effect of supporting concurrent accesses in the DRAM system, and to what degree can this feature be exploited by modern CPU designs?
- What is the effect of having multiple channels open to the DRAM system? Should they be ganged together to increase bandwidth and decrease end-to-end latency, or should they be independent?
- What can be done at the architecture level to decrease critical-word latency?
- What is the effect of banking, both externally and internally? What is the optimal degree, given a particular application? What is the correlation with program behavior, if any?
- What is the effect of interleaving? What are the optimal degree and granularity, given a particular application? What is the correlation with program behavior, if any?
- For burst-mode DRAMs such as SDRAM and ESDRAM, what is the optimal burst size to accommodate both concurrency and fast end-to-end latency? How does this interact with the degrees of banking and interleaving?
- What are the tradeoffs between bus width and bus speed in the performance, cost, and power-consumption domains?
- What are the effects of bank conflicts, and how easily can they be avoided by program reorganization at compile time and/or run time?
- Are there row-buffer management policies that can increase the row-buffer hit rate to the point that the row buffers form a highly effective cache?
- For what applications does embedded DRAM make sense? How suitable is embedded DRAM for a DSP-style execution model?

This project is supported by an NSF CAREER award to answer these questions.
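To illustrate what "interleaving at different granularities" means, here is a minimal sketch assuming a simple round-robin mapping of fixed-size address chunks to banks. The function name, bank count, and chunk sizes are hypothetical, not the project's simulator configuration. Fine-grained interleaving spreads a sequential stream of cache blocks across banks (favoring concurrency), while coarse-grained interleaving keeps the stream in one bank, where consecutive blocks may hit in the same open row.

```python
def bank_index(addr: int, num_banks: int, granularity: int) -> int:
    """Bank holding byte address `addr` when consecutive chunks of
    `granularity` bytes are assigned to banks round-robin
    (a hypothetical mapping, for illustration only)."""
    return (addr // granularity) % num_banks

# A sequential stream of 64-byte cache-block requests (hypothetical sizes).
addresses = [i * 64 for i in range(8)]

fine = [bank_index(a, num_banks=4, granularity=64) for a in addresses]
coarse = [bank_index(a, num_banks=4, granularity=4096) for a in addresses]
print(fine)    # [0, 1, 2, 3, 0, 1, 2, 3]
print(coarse)  # [0, 0, 0, 0, 0, 0, 0, 0]
```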

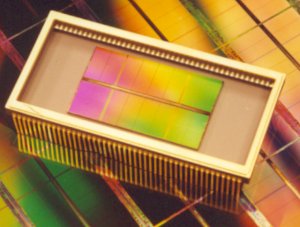

Architecture Issues in DRAM Devices and Systems: A Tutorial

This is a 150-slide tutorial (printed two slides per page) on DRAMs, from the basics (DRAM organization, operation, timing, etc.) to more advanced topics (electrical loading, packaging, clock distribution at high speeds, etc.). It includes performance comparisons of architectures such as Fast Page Mode (FPM), Extended Data Out (EDO), Synchronous DRAM (SDRAM), Rambus, Direct Rambus, Double Data Rate SDRAM (DDR), etc. It also compares different system-level parameters such as bus widths, bus speeds, channel organizations, banking, etc. Lastly, it takes a brief look at embedded DRAM as it applies to DSP.

If you have any questions, please contact me.

System-Level Design Issues

We have studied organization-level parameters associated with the design of the primary memory system, i.e., the DRAM system beneath the lowest level of the cache hierarchy. These parameters are orthogonal to architecture-level parameters such as DRAM core speed and bus arbitration protocol, and they include bus width, bus speed, number of independent channels, degree of banking, read burst width, write burst width, etc. This study presents the effective cross-product of varying each of these parameters independently. The simulator is based on SimpleScalar 3.0a and models a fast (simulated at 2 GHz), highly aggressive out-of-order uniprocessor. The interface to the primary memory system is fully non-blocking, supporting up to 32 outstanding misses at both the level-1 and level-2 caches.

Our simulations show the following:

- (a) The choice of primary memory-system organization is critical: it can affect total execution time by a factor of 3 for a constant CPU organization and DRAM speed.
- (b) The most important factors in the performance of the primary memory system are the channel speed (bus cycle time) and the granularity of data access (the burst width); each of these can independently affect total execution time by a factor of 2.
- (c) For small bursts, multiple narrow independent channels to the memory system exhibit better performance than a single wide channel; for large bursts, channel cycle time is the most important factor.
- (d) The degree of DRAM multi-banking plays a secondary role in its impact on total execution time.
- (e) The optimal burst width tends to be high (large enough to fetch an L2 cache block in 2 bursts) and scales with the block size of the level-2 cache.
- (f) The memory queue sizes can be extremely large, due to the bursty nature of references to the primary memory system and the promotion of reads ahead of writes.

Among other things, we conclude that the scheduling of the memory bus is the primary bottleneck. Small bursts cause major backups in the memory system, because the time to transfer a burst is on the order of the bus turnaround overhead, and because the asymmetric nature of read requests vs. write requests makes it inefficient to interleave the two. For larger bursts, the turnaround time is amortized, and interleaving reads with writes is not much different from interleaving pairs of reads or pairs of writes, because each burst holds the data bus for a long time.
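The amortization argument can be made concrete with a back-of-the-envelope model (ours, not the simulator's): if every burst pays a fixed read/write turnaround penalty, the fraction of bus cycles that carry data is t / (t + overhead), where t is the burst's transfer time. The 8-byte bus width and 4-cycle turnaround below are hypothetical values chosen only to show the trend.

```python
def bus_efficiency(burst_bytes: int, bus_width_bytes: int,
                   turnaround_cycles: int) -> float:
    """Fraction of data-bus cycles that carry data when every burst is
    followed by a fixed read/write turnaround penalty (simplified model)."""
    transfer_cycles = burst_bytes / bus_width_bytes
    return transfer_cycles / (transfer_cycles + turnaround_cycles)

# Hypothetical parameters: 8-byte-wide data bus, 4-cycle turnaround.
for burst in (16, 32, 64, 128):
    print(burst, round(bus_efficiency(burst, 8, 4), 2))
# prints: 16 0.33, 32 0.5, 64 0.67, 128 0.8 (one pair per line)
```

With small bursts, the transfer time is comparable to the turnaround and most bus cycles are wasted; as bursts grow, the turnaround becomes a small fraction of each bus tenure.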

Details can be found in the following papers:

Where We Are in the DDR2 Specification (Spring 2000)

This study is a performance examination of the DDR2 DRAM architecture and its proposed cache-enhanced variants. These preliminary examinations are based upon ongoing collaboration between the authors and the Joint Electron Device Engineering Council (JEDEC) Low Latency DRAM Working Group, a working group within the JEDEC 42.3 Future DRAM Task Group. This Task Group is responsible for developing the DDR2 standard. The goal of the Low Latency DRAM Working Group is the creation of a single cache-enhanced (i.e., low-latency) architecture based upon the DDR2 interface.

There are a number of proposals for reducing the average access time of DRAM devices, most of which involve adding SRAM to the DRAM device. Because DDR2 is viewed as a future standard, these proposals are frequently applied to a device with a DDR2 interface. For the same reasons that it is advantageous to have a single DDR2 specification, it is beneficial to have a single low-latency specification. The authors are involved in ongoing research to evaluate which enhancements to the baseline DDR2 devices will yield lower average latency, and for what types of applications. To provide context, experimental results will be compared against those for systems using PC100 SDRAM, DDR133 SDRAM, and Direct Rambus (DRDRAM).

This work is just starting to produce performance data. Initial results show that low-latency devices yield significant performance improvements, though smaller than those of a generational change in DRAM interface. It is also apparent that there are at least two classes of applications: (1) those that saturate the memory bus, whose performance depends on the potential bandwidth and bus utilization of the system; and (2) those that lack the access parallelism to fully utilize the memory bus, whose performance depends on the average latency of a primary memory access.
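One simple way to see why the two classes arise is Little's law, a standard queueing relation rather than the method used in this work: the bandwidth a CPU can sustain is at most (outstanding misses × block size) / average latency. If that figure falls below the bus's peak bandwidth, the application cannot saturate the bus and its performance tracks latency. The function name and all numbers below are hypothetical.

```python
def classify_workload(outstanding_misses: int, block_bytes: int,
                      latency_ns: float, peak_gb_per_s: float) -> str:
    """Classify a workload using Little's law:
    sustained bandwidth <= outstanding requests * block size / latency.
    Note that 1 byte/ns equals 1 GB/s (decimal units)."""
    # Bandwidth the CPU's memory-level parallelism could sustain
    # if the bus itself imposed no limit.
    demand_gb_per_s = outstanding_misses * block_bytes / latency_ns
    if demand_gb_per_s >= peak_gb_per_s:
        return "bandwidth-bound"   # class 1: saturates the memory bus
    return "latency-bound"         # class 2: limited by average access latency

# Hypothetical numbers: 64-byte blocks, 60 ns average latency, 3.2 GB/s peak.
print(classify_workload(2, 64, 60.0, 3.2))   # latency-bound (about 2.1 GB/s)
print(classify_workload(16, 64, 60.0, 3.2))  # bandwidth-bound (about 17 GB/s)
```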

Details can be found in the following paper:

- "DDR2 and low-latency variants." Brian Davis, Trevor Mudge, Bruce Jacob, and Vinodh Cuppu. Proc. Memory Wall Workshop, held in conjunction with the 27th International Symposium on Computer Architecture (ISCA'00), Vancouver BC, Canada, May 2000.

State of the DRAM Union

Copy of an email I recently sent out regarding the state of affairs in the DRAM world today (spring 2000):

As many in the literature have pointed out, DRAMs are a commodity and are therefore subject to the pressures of price. It is not surprising, then, that nearly all the benefits of improved technology are aimed at improving density (thus cost), and that all successful (note: commercially successful) performance enhancements to DRAM architectures over the last decade or two have been minor tweaks to the core. The easiest, adding page mode, perhaps had the largest impact on cost/performance, as it required virtually no new hardware. EDO DRAM added a small latch after the column muxes and, because the DRAM could therefore perform precharge sooner, improved performance over FPM DRAM significantly (roughly 30% according to our studies); it was thus widely adopted. ESDRAM moves that latch to the other side of the column mux, where it has to latch an entire row of data, thus increasing its size (and cost) noticeably. Though our studies show that ESDRAM provides another significant boost in performance (roughly 15% over SDRAM), it has not been widely adopted. Clearly, cost (in die area, and perhaps in licensing agreements?) is the primary factor.
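Page mode works because each bank keeps its most recently accessed row latched in the sense amplifiers, so a later access to the same row needs only a column access. A minimal sketch of counting such row-buffer hits under an open-page policy follows; the trace format and function name are hypothetical, and real controllers add timing constraints this ignores.

```python
def row_hits(requests, num_banks):
    """Count row-buffer hits under an open-page policy: each bank keeps
    its most recently accessed row latched in the sense amps.
    `requests` is a sequence of (bank, row) pairs (hypothetical trace)."""
    open_row = [None] * num_banks
    hits = 0
    for bank, row in requests:
        if open_row[bank] == row:
            hits += 1             # page-mode hit: column access only
        else:
            open_row[bank] = row  # miss: precharge, then activate new row
    return hits

trace = [(0, 5), (0, 5), (1, 2), (0, 5), (0, 7), (1, 2)]
print(row_hits(trace, num_banks=2))  # 3
```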

Looking back at the improvements of the last decade or two, it has been far easier (cheaper) to improve DRAM bandwidth than latency: bandwidth improvements have been achieved through relatively straightforward tricks and reorganization of hardware already in place. For example, exploiting the sense amps as a cache to achieve page mode required no new hardware and yielded significant bandwidth improvements. Another example: EDO's better overlap of data transfer with column access, through the addition of a small output latch, required little hardware and yielded significant bandwidth improvements. Another: burst-mode SDRAM, where the data packets are shoved out of the DRAM pretty much as fast as the device can handle, requires small control modifications and a small register to hold the burst length, but improves bandwidth while simplifying memory-controller design. Until recently, most of these bandwidth improvements resulted in lower latencies as well, because we tend to grab data in large chunks (i.e., to fill a level-2 cache line). However, modern (well, actually, decade-old) CPUs have gotten better at waiting for data: they request data critical-word-first and stall the requesting instruction only until the critical word arrives, while other instructions continue merrily on their way, perhaps making additional requests of the DRAM system. Therefore, we recently reached the point where bandwidth improvements no longer reduce latency. Now we have to actually make the DRAM core faster, which cannot be done with simple tricks.
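A simplified timing model shows why critical-word-first decouples latency from bandwidth. Assume (hypothetically) a fixed 50 ns core access, a 64-byte line, and 8-byte words: doubling bus bandwidth shrinks the full line fill considerably, but barely moves the time at which the stalled instruction gets its word. The function name and all parameters are illustrative only.

```python
from typing import Tuple

def fill_latencies(core_ns: float, line_bytes: int, bytes_per_ns: float,
                   word_bytes: int = 8) -> Tuple[float, float]:
    """Return (time to critical word, time to full line) in ns for a
    critical-word-first line fill. Simplified model, hypothetical numbers."""
    critical = core_ns + word_bytes / bytes_per_ns   # first word on the bus
    full_line = core_ns + line_bytes / bytes_per_ns  # whole line transferred
    return critical, full_line

for bw in (0.8, 1.6):  # GB/s, i.e., bytes per ns
    crit, full = fill_latencies(core_ns=50.0, line_bytes=64, bytes_per_ns=bw)
    print(f"{bw} GB/s: critical word {crit:.0f} ns, full line {full:.0f} ns")
# 0.8 GB/s: critical word 60 ns, full line 130 ns
# 1.6 GB/s: critical word 55 ns, full line 90 ns
```

In this model only a faster core (a smaller `core_ns`) substantially reduces the critical-word time.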

The main attempts to improve DRAM core latency have been to reduce the length of the word lines and/or bit lines. It takes time to drive long word lines (on the order of 10 ns, I have been told), so reducing their length has the potential to reduce row access time dramatically. This can be done by fragmenting the DRAM array into many small banks (as in the MoSys design), or by driving only a portion of the word lines at a time (as in FCRAM; in essence, putting a portion of the column mux before the row access). Other attempts have added levels of cache to the DRAM itself. For example, Virtual Channel DRAM (VCDRAM) places an associative cache for DRAM pages (or subsets thereof) on the DRAM device. This takes advantage of the low access time to an entire DRAM row that is available only on-chip. Any hit in the cache obviates a row access and therefore improves access time. Though "cached DRAMs" like this have been offered in the past, none have become commercially viable. One theory is that caches placed on the DRAM are fundamentally no better than off-chip caches and may simply serve to make the DRAM more expensive.
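To make the "any hit obviates a row access" point concrete, here is a minimal sketch of a small, fully associative, LRU cache of DRAM rows. The class name, size, and replacement policy are our assumptions; this is not a model of VCDRAM's actual command protocol, in which the memory controller manages the channels explicitly.

```python
from collections import OrderedDict

class RowCache:
    """Small fully associative LRU cache of DRAM rows (or row segments),
    keyed by (bank, row). Hypothetical sizes and policy."""

    def __init__(self, entries: int):
        self.entries = entries
        self.lines = OrderedDict()
        self.hits = self.misses = 0

    def access(self, bank: int, row: int) -> bool:
        key = (bank, row)
        if key in self.lines:
            self.lines.move_to_end(key)      # mark as most recently used
            self.hits += 1
            return True                      # hit: no row access needed
        self.misses += 1                     # miss: row access, then fill
        self.lines[key] = True
        if len(self.lines) > self.entries:
            self.lines.popitem(last=False)   # evict least recently used
        return False

cache = RowCache(entries=2)
for bank, row in [(0, 1), (0, 2), (0, 1), (0, 2)]:
    cache.access(bank, row)
print(cache.hits, cache.misses)  # 2 2
```

For this alternating trace, a single open row per bank would miss on every access, while two cached rows turn the second pair of accesses into hits.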

Another predictable effect of DRAM being a commodity is that companies will try to invent architectures that distinguish themselves in some fashion from the offerings around them, so as to attract niche markets and command a higher price in those markets. I think this is one of the reasons we have seen such a profusion of (largely incompatible) DRAM architectures lately. I think that some of these architectures are in fact succeeding; for example, I have heard that Nintendo will use the MoSys DRAM in its next video game console, which should prove interesting.

Then there are even more radical departures, such as Rambus and the ill-fated Synchronous Link DRAM (SLDRAM). Like many recent networking architectures, which favor bit-serial links because extremely high clock rates are possible, these reduce the width of the memory bus in favor of fast bus cycle times. Our earliest studies showed negligible performance differences: systems with roughly equivalent bandwidth exhibited roughly equivalent performance, and the only architecture to stand out was the one that made significant changes to the DRAM core, ESDRAM. However, one benefit of these narrow-bus architectures is that a memory controller can talk to more than one channel at a time, which would be impractical with a 64-bit or 128-bit bus. This opens up a whole new realm of possibilities, and the first systems to explore it will be the newest Compaq Alpha (connecting to four or more Direct Rambus channels) and the new Sony PlayStation 2 (which connects to two Direct Rambus channels). The PlayStation 2 is available now; I do not know about the Alpha offering.
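Why a narrow bus can match a wide one: nominal peak bandwidth is simply bytes per transfer × transfer rate × number of channels. The sketch below uses nominal figures for common configurations (PC100 SDRAM: 64 bits at 100 MT/s; Direct Rambus in its PC800 speed grade: 16 bits at 800 MT/s). These are peak numbers only; sustained bandwidth depends on the controller and access pattern, which is exactly what the studies above measure.

```python
def peak_bandwidth_mb_s(bus_bits: int, mega_transfers_per_s: float,
                        channels: int = 1) -> float:
    """Nominal peak bandwidth in MB/s (decimal units):
    bytes per transfer x transfer rate x number of channels."""
    return bus_bits / 8 * mega_transfers_per_s * channels

systems = {
    "PC100 SDRAM (64-bit, 100 MT/s)": peak_bandwidth_mb_s(64, 100),
    "Direct Rambus (16-bit, 800 MT/s)": peak_bandwidth_mb_s(16, 800),
    "Two Direct Rambus channels": peak_bandwidth_mb_s(16, 800, channels=2),
}
for name, mb_s in systems.items():
    print(f"{name}: {mb_s:.0f} MB/s")
# PC100 SDRAM (64-bit, 100 MT/s): 800 MB/s
# Direct Rambus (16-bit, 800 MT/s): 1600 MB/s
# Two Direct Rambus channels: 3200 MB/s
```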

Other Publications

DRAM Systems Research: